Jennifer Kamrass confessed her worries to her therapist: her marriage, her finances, and her self-esteem. Therapists are legally and ethically bound to confidentiality, but two years later, her former employer produced in court a transcript of every word Kamrass had typed to her psychologist using the app Talkspace.

“When I came to understand how much information they had, I was shocked,” Kamrass’ therapist said in an interview. The therapist declined to be named out of concern for her reputation.

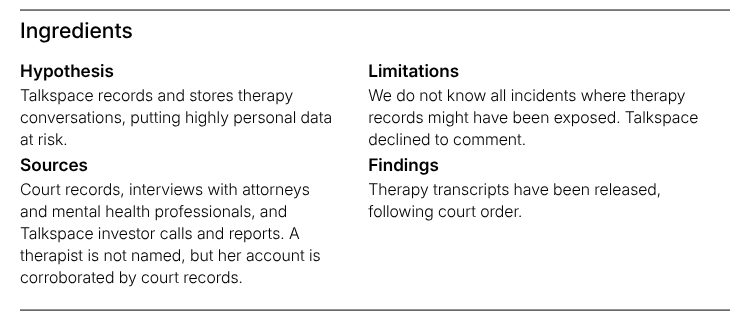

An investigation by Proof News found that therapy sessions on the telehealth platform Talkspace are at risk of exposure, according to lawyers and court records showing a person’s intimate conversations being used against them. The company records and stores text, video, and audio messages with clients and, as the company’s CEO recently told investors, has amassed “one of the largest mental health data banks in the world,” containing 140 million message exchanges. The end goal: training a soon-to-be-released AI therapy companion bot, according to reports the company released to investors.

Linda Michaels, a psychologist and co-founder of Psychotherapy Action Network (PsiAN), an advocacy group with nearly 7,000 members, described the data mining as “awful.” In a traditional therapy session, therapists might scribble only a few sentences recording a patient's progress, she explained. By creating a transcript of the exact back-and-forth of a digital therapy session, Talkspace has opened a new window into people’s private lives, one that could be used against them.

“Privacy and confidentiality: It's in the code of ethics of every psychotherapist,” Michaels said. “It is really taking advantage of vulnerable people at a vulnerable time of their life.”

Talkspace executives assure investors that the data is anonymized, but experts say such anonymity can be broken.

Kamrass’ case began after her employer, AdventHealth, terminated her nurse practitioner role in 2021, when she was nearly nine months pregnant. She began using Talkspace, a benefit provided by AdventHealth, to talk through worries about supporting her family and finding another nursing job so close to giving birth, court records show.

In the months that followed, Kamrass filed a pregnancy discrimination claim against her former employer. (A federal judge ultimately found the claim insufficiently supported and ruled in favor of AdventHealth, which argued the termination stemmed from the closure of a facility for financial reasons.) Kamrass’ therapist agreed to testify on her behalf, and as part of their defense strategy, the employer’s lawyers secured a court order for a litany of Kamrass’ Talkspace records, including her messages with her therapist.

“You’ve now got written evidence of everything discussed and in a normal therapy session that wouldn’t be true,” Peter Andreone, one of Kamrass’ lawyers, said. “They turned around and used it against her.”

AdventHealth declined to comment. Kamrass, the nurse, did not respond to requests for comment.

Talkspace also did not respond to requests for comment. Talkspace executives emphasize to investors that the company is compliant with the Health Insurance Portability and Accountability Act, the nation’s best-known law governing patient privacy.

HIPAA requires that people’s information be deidentified, which includes stripping out a person’s name, before it is shared. The law gives special protection to “psychotherapy notes,” according to the US Department of Health and Human Services: they cannot be disclosed for any reason without patient consent, except when disclosure is required by other laws.

Industry experts warn that the health care sector is frequently targeted by cyberattacks, which leaves even anonymized data vulnerable.

“We know that information that's been anonymized can very easily be reidentified,” said Tori Noble, a staff attorney at the Electronic Frontier Foundation, which advocates for greater privacy protection. “[HIPAA] is not enough protection.”

Talkspace’s privacy practices have been questioned in the past. In 2022, U.S. Sens. Elizabeth Warren, Cory Booker, and Ron Wyden sent a letter to Talkspace expressing concerns about whether it allowed tech companies like Google and Facebook to use patient data. In response, John C. Reilly, the company’s chief legal officer, wrote that “all data related to their treatment is strictly used for therapeutic purposes,” and the company limits what data is used for ads.

Two years later, parent advocates accused the company of sharing personal information of New York City teens with Facebook, Amazon, Meta, Google, and Microsoft via website trackers.

Talkspace later agreed to amend its data collection policy with the city, which had contracted with the platform to provide services to teens. Beth Haroules, a senior staff attorney at the New York Civil Liberties Union who co-signed letters with the advocates, said the ordeal revealed a concerning gap in city officials’ understanding of privacy issues.

“I don’t think the city understood what they were dealing with,” Haroules said.

In response, William Fowler, a spokesperson for the New York City Department of Health and Mental Hygiene, which oversees the Talkspace deal, said the agency is “well-versed in patient privacy issues.” Fowler said the department has taken steps to address privacy concerns, and that “use of data for any purpose other than providing the services is prohibited.”

Talkspace users agree to the company’s privacy policy, which discloses the use of chat, audio, and video communication to “develop new products.” European Union residents can opt out of certain personal data processing, the policy states, but US residents aren’t offered a similar option, though they can request that their data be deleted.

“If you do not want us to share personal data or feel uncomfortable with the ways we use information in order to deliver our Services, please do not use the Services,” the policy states.

Research has repeatedly shown that users do not read terms of service agreements. Jodi Halpern, a psychiatrist and professor of bioethics at the University of California, Berkeley, who researches chatbots, said people give away “vulnerable information” without understanding the potential risks.

“It's very different than in actual human therapy where there's a lot of training about the informed consent process,” Halpern said. “They just click it.”

From Chat Therapy to Chatbot

Talkspace launched in 2012, promising to make therapy accessible to the masses by replacing the usual 50-minute, in-person session with asynchronous texting with a provider. The company started by attracting users through ads on podcasts and social media, but then swept up millions of eligible customers through partnerships with insurance providers like UnitedHealth Group in 2019 and Cigna in 2020.

A push into treating teenagers followed, and the platform cut deals with cities and school districts, including Seattle, Baltimore County, and New York City. Universities and sororities came into the fold, as did a program for senior citizens and US military families.

By the end of last year, the platform boasted a network of approximately 200 million eligible patients. Their conversations form the basis of Talkspace’s vast mental health database. Speaking at a healthcare investment conference last year, Talkspace CEO Jon Cohen said the platform had compiled “8 billion words, 140 million messages, 6.2 million assessments.”

The data has helped train a “therapy companion” chatbot slated to be released later this year, Cohen said during a recent earnings call. Down the road, Cohen told investors, the company wants to secure insurance reimbursement for the automated tool.

“TalkAI does not replace clinicians, but rather extends their reach,” Cohen said. “We have strategically positioned ourselves to be a leader in the application of AI to mental health.”

In March, Universal Health Services Inc. announced it would acquire Talkspace for $835 million. UHS describes itself as one of the largest healthcare providers in the country, operating 119 outpatient and 346 inpatient behavioral health facilities, where people in crisis can get medication and intensive therapy.

“This acquisition aligns with UHS’ core growth objectives … delivering a comprehensive, technology-enabled continuum of care that supports innovative approaches to mental health,” Marc D. Miller, president and CEO of UHS, said in the deal’s announcement.

Psychologists are less bullish on the concept of chatbot therapy. Michaels, the psychologist and Psychotherapy Action Network co-founder, and other therapists Proof spoke to said therapy companion chatbots can be risky to patient well-being, and that they could ultimately be aimed at trying to “replace therapists altogether.”

In 2019, before the proliferation of AI chatbots, Michaels co-authored a letter to the American Psychological Association, the main professional organization for psychologists, outlining concerns over Talkspace’s business practices, including inadequate patient privacy protection.

Weeks later, Talkspace’s lawyers filed a libel suit against her, her co-authors, and their organization, arguing that their letters had caused the APA to prohibit Talkspace from advertising at one of the APA’s largest conferences. The case was ultimately dismissed on jurisdictional grounds.

“This was just a bullying tactic to try to get us to shut up,” Michaels said.

Therapists also worry about client safety. Google and Character.AI, a developer of AI companions, agreed to settle a lawsuit brought by the mother of 14-year-old Sewell Setzer III, which alleged that the Florida teen’s last conversation on Character.AI encouraged his suicide.

Similar stories of bad behavior by AI companions have been recounted in Florida, Colorado, Oregon, and California. Experts have cautioned that the technology’s humanlike design encourages engagement, even though chatbots lack safeguards for users in need of help.

Cohen, Talkspace’s CEO, has sought to differentiate TalkAI from other chatbots, which he said were “never built to support mental health.” Safe AI tools need to recognize delusions and distorted thinking, Cohen told investors, and the company has been testing a proprietary risk algorithm to flag situations where professionals need to swoop in.

Some states are looking to pump the brakes on therapy bots. The Illinois legislature banned therapy bots last year, and a California legislator proposed a similar measure in January. Unions representing therapists backed both measures.

Unions have also started demanding AI provisions in contracts. A union representing therapists at Kaiser Permanente, a large insurer and healthcare provider, went on strike on March 18 after the company refused to agree to a prohibition on AI tools replacing therapists.

“It’s another version of them penny-pinching,” said Ilana Marcucci-Morris, a Kaiser therapist.